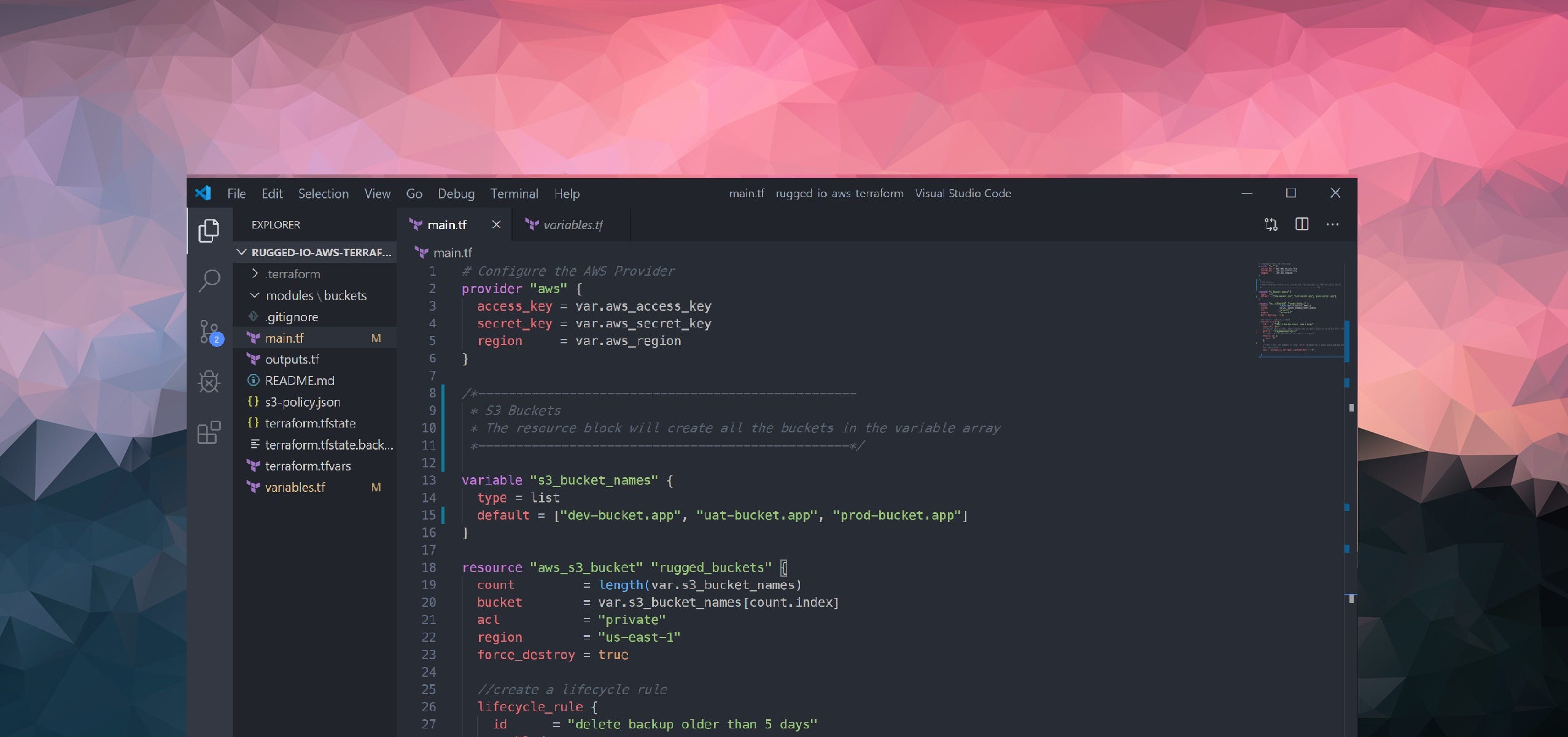

Terraform tips: How to create multiple AWS S3 buckets with a single resource config

Intro

When building and managing applications, repeatable and automated processes let you focus on productive and exciting work, which makes the whole experience far more enjoyable.

I run about 10 SaaS apps and 2 Serverless sites that each require an S3 bucket for storage. These 12 S3 buckets share the same lifecycle rules and bucket configs, and I managed them manually for a couple of years.

Creating multiple S3 buckets with Terraform is simple if you don’t mind unstructured and unmanageable code: set your “provider” configs and create a “resource” block for each of your buckets. With 12 S3 buckets, you’d need 12 resource blocks that all do exactly the same thing. This is a messy way to write your HCL code and would result in more work than managing the buckets by hand.

So as part of the Cloud automation plan that I've been developing for Rugged I/O, I’ve begun with my S3 bucket automation.

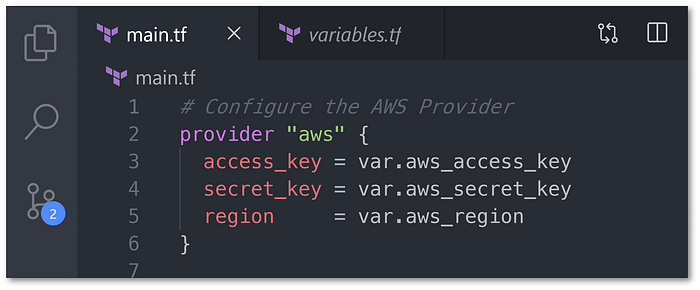

1. Setting up your Provider and variable

Set your AWS access key and secret key in the “provider” block.

Note: Terraform 0.12+ has a simpler interpolation syntax for variable references, so a variable can be referenced directly (var.name) instead of being wrapped in “${var.name}”.
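As a rough sketch of this step, assuming Terraform 0.12+ and the AWS provider, the provider block could look like the following. The variable names (“aws_access_key”, “aws_secret_key”, “aws_region”) and the default region are placeholders chosen for illustration, not taken from the original config.

```hcl
# Hypothetical variable names; supply the values via a .tfvars file or
# TF_VAR_* environment variables rather than hardcoding them here.
variable "aws_access_key" {
  type = string
}

variable "aws_secret_key" {
  type = string
}

variable "aws_region" {
  type    = string
  default = "us-east-1" # assumed region, change to suit
}

provider "aws" {
  # 0.12+ syntax: direct references, no "${...}" wrapper needed
  access_key = var.aws_access_key
  secret_key = var.aws_secret_key
  region     = var.aws_region
}
```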

2. Create a single variable for the “s3_bucket_names”

Your “s3_bucket_names” variable will contain an array of all the bucket names you want. This array is set as the variable’s “default” value.
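A minimal sketch of such a variable is shown here. The bucket names are made-up examples; real S3 bucket names must be globally unique and lowercase.

```hcl
variable "s3_bucket_names" {
  type = list(string)

  # Example names only; replace with your own bucket names.
  default = [
    "example-app-one-storage",
    "example-app-two-storage",
    "example-site-assets",
  ]
}
```

Adding a bucket later then only means appending a name to this list.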

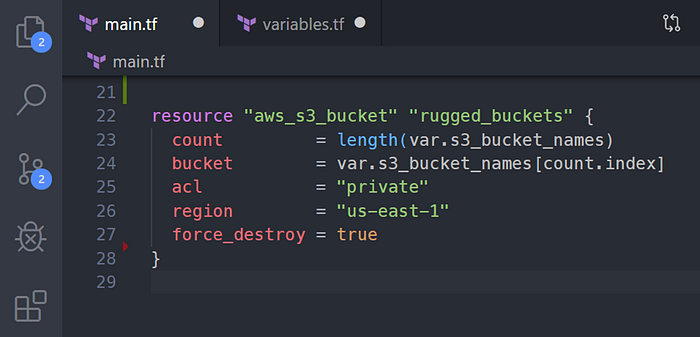

3. Create a single resource for “aws_s3_bucket”

We only need 1 resource block, which will iterate through the bucket names in the variable.

The “count” meta-argument is set to the number of names in the “s3_bucket_names” variable you created, so Terraform creates one bucket per name.

The “bucket” attribute takes the name at the current index (count.index) of the array in the “s3_bucket_names” variable.
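Put together, the resource described above might be sketched as follows. The resource label “app_buckets” is a hypothetical name, and the block assumes a list variable named “s3_bucket_names” is declared elsewhere in the configuration. Bucket settings such as lifecycle rules are left out because how they are declared depends on the AWS provider version.

```hcl
# Assumes: variable "s3_bucket_names" of type list(string) is declared elsewhere.
resource "aws_s3_bucket" "app_buckets" {
  # One instance of this resource per name in the list
  count = length(var.s3_bucket_names)

  # count.index runs from 0 to count - 1, selecting each name in turn
  bucket = var.s3_bucket_names[count.index]
}
```

Each bucket is then addressable by its index, for example aws_s3_bucket.app_buckets[0]. Because instances are tracked by position, removing a name from the middle of the list shifts the indexes of the names after it, so appending new names at the end is the safer habit.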

The Complete Code

Variable File

This technique can be used for any reusable resources, not just S3 buckets, through a thoughtful combination of a simple variable assignment, resource count and naming prefixes.

Consequently, this abstracted method of resource building has removed hundreds of lines of code and made the entire HCL easier to read and understand.
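To illustrate the same pattern on a different resource type, here is a small sketch that creates several SQS queues with a shared naming prefix. The variable names, the prefix and the queue names are all invented for this example.

```hcl
variable "name_prefix" {
  type    = string
  default = "example" # hypothetical prefix
}

variable "queue_names" {
  type    = list(string)
  default = ["orders", "emails", "reports"] # hypothetical names
}

resource "aws_sqs_queue" "app_queues" {
  count = length(var.queue_names)

  # Produces names such as "example-orders", "example-emails", ...
  name = "${var.name_prefix}-${var.queue_names[count.index]}"
}
```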